-

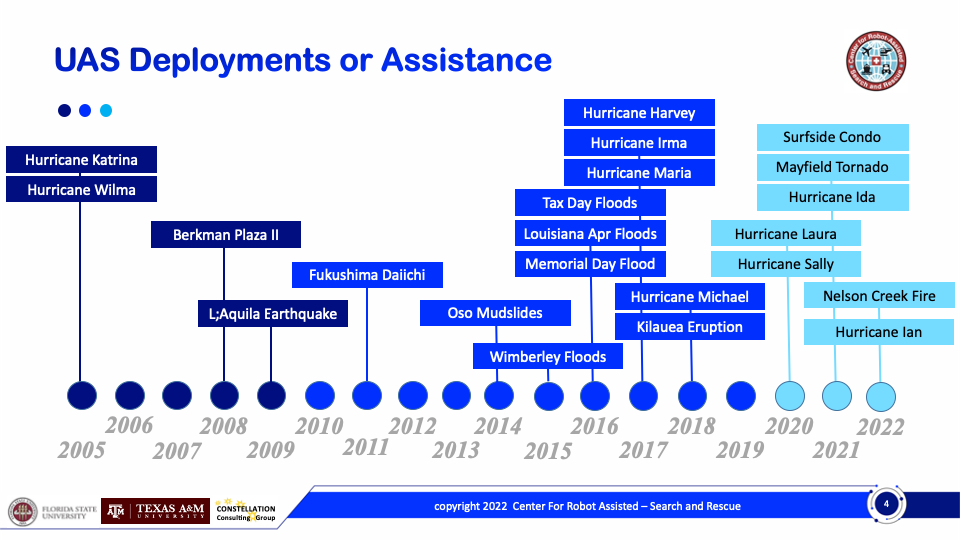

22 Drone Deployments or Assists Since 2005

Posted by Robin Murphy on Dec 06, 2022 at 6:14 am America/Chicago

Since 2005, when we had the first deployment of small...READ MORE

-

Flying Small UAS for Flooding in Rural and Mountainous Areas

Posted by Robin Murphy on Sep 18, 2018 at 12:34 pm America/Chicago

These are some of the lessons learned by the CRASAR...READ MORE

-

NBC Nightly News Feature on CRASAR “Robot rescue: Behind the technology deployed for disaster relief”

Posted by Robin Murphy on Aug 27, 2018 at 1:26 pm America/Chicago

NBC Nightly News on Aug. 28, 2018, featured CRASAR deployed...READ MORE

-

Six Lessons for Unmanned Systems at Wildfires

Posted by Robin Murphy on Aug 24, 2018 at 11:00 am America/Chicago

As the wildfire season burns on, here are 6 lessons...READ MORE

-

Two Notes About Flying Small UAS for Wildfires

Posted by Robin Murphy on Aug 06, 2018 at 5:14 pm America/Chicago

The California wildfires have generated significant interest in small UAS....READ MORE

-

Best Practices for Small Unmanned Aerial Systems for Floods Now Available!

Posted by Joan Quintana on Jun 21, 2018 at 8:10 am America/Chicago

With the extreme weather throughout the US, we’ve put together...READ MORE

Tags

- alpha geek

- asimov

- caterpillars

- collaboration

- cologne

- delft

- disaster response

- earthquake

- ethics

- firefighting

- ft hood

- Haiti Earthquake

- hawaii volcano

- Kobe Earthquake

- public safety

- rescue robots

- robocup

- robotics

- robotics rodeo

- snakes

- uav

- ugv

- UMV

- volcano eruption

- wired

- World Trade Center
