-

Lessons Learned: Deploying UAVs for Volcano Eruption Response

Posted by Joan Quintana on Jun 11, 2018 at 10:30 pm America/Chicago

Having recently supported the response to the Hawaii volcano eruption at...

-

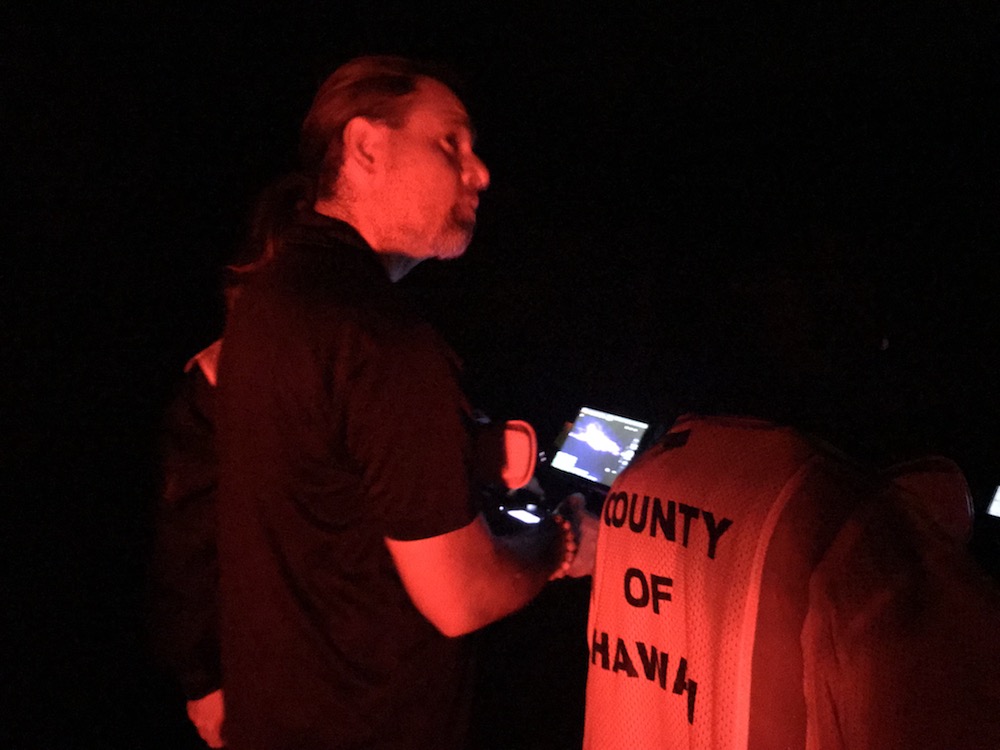

VOLUNTEER RESPONDERS USE ROBOTS AND NEW MAPPING TECHNOLOGIES TO SAVE LIVES AND PROPERTY IN HAWAII VOLCANO ERUPTION

Posted by Joan Quintana on Jun 11, 2018 at 9:56 pm America/Chicago

FOR IMMEDIATE RELEASE June 11, 2018 CONTACT: Joan Quintana email:...

-

Back from Leilani volcanic eruption…

Posted by admin on May 24, 2018 at 3:52 pm America/Chicago

A five-person volunteer team from the Center for Robot-Assisted...

-

6 Ways volcanoes are different for flying small UAS

Posted by admin on May 11, 2018 at 10:18 am America/Chicago

We've been watching the Hawaii volcano with interest; it...

-

Ground, Aerial, and Marine Rescue Robots of the Year Announced at National Robotics Week

Posted by admin on Apr 20, 2018 at 7:06 am America/Chicago

With the 2018 hurricane season just weeks away, the independent...

-

Top 5 Surprises in Unmanned Systems for Mudslides

Posted by admin on Jan 12, 2018 at 10:56 am America/Chicago

CRASAR's Sam Stover and Robin Murphy...

Tags

- alpha geek

- asimov

- caterpillars

- collaboration

- cologne

- delft

- disaster response

- earthquake

- ethics

- firefighting

- ft hood

- Haiti Earthquake

- hawaii volcano

- Kobe Earthquake

- public safety

- rescue robots

- robocup

- robotics

- robotics rodeo

- snakes

- uav

- ugv

- UMV

- volcano eruption

- wired

- World Trade Center

Archives

- December 2022

- September 2018

- August 2018

- June 2018

- May 2018

- April 2018

- January 2018

- December 2017

- September 2017

- August 2017

- May 2017

- April 2017

- March 2017

- November 2016

- October 2016

- September 2016

- August 2016

- June 2016

- April 2016

- March 2016

- February 2016

- January 2016

- December 2015

- November 2015

- October 2015

- September 2015

- August 2015

- July 2015

- June 2015

- May 2015

- April 2015

- March 2015

- February 2015

- January 2015

- December 2014

- November 2014

- October 2014

- September 2014

- August 2014

- July 2014

- June 2014

- May 2014

- April 2014

- March 2014

- February 2014

- December 2013

- November 2013

- October 2013

- September 2013

- August 2013

- July 2013

- June 2013

- May 2013

- April 2013

- March 2013

- February 2013

- January 2013

- December 2012

- November 2012

- October 2012

- September 2012

- August 2012

- July 2012

- June 2012

- May 2012

- April 2012

- March 2012

- January 2012

- December 2011

- November 2011

- October 2011

- August 2011

- June 2011

- May 2011

- April 2011

- March 2011

- February 2011

- January 2011

- December 2010

- November 2010

- October 2010

- September 2010

- July 2010

- May 2010

- April 2010

- February 2010

- January 2010

- September 2009

- August 2009

- July 2009

- June 2009

- April 2009

- March 2009

Our Sponsors